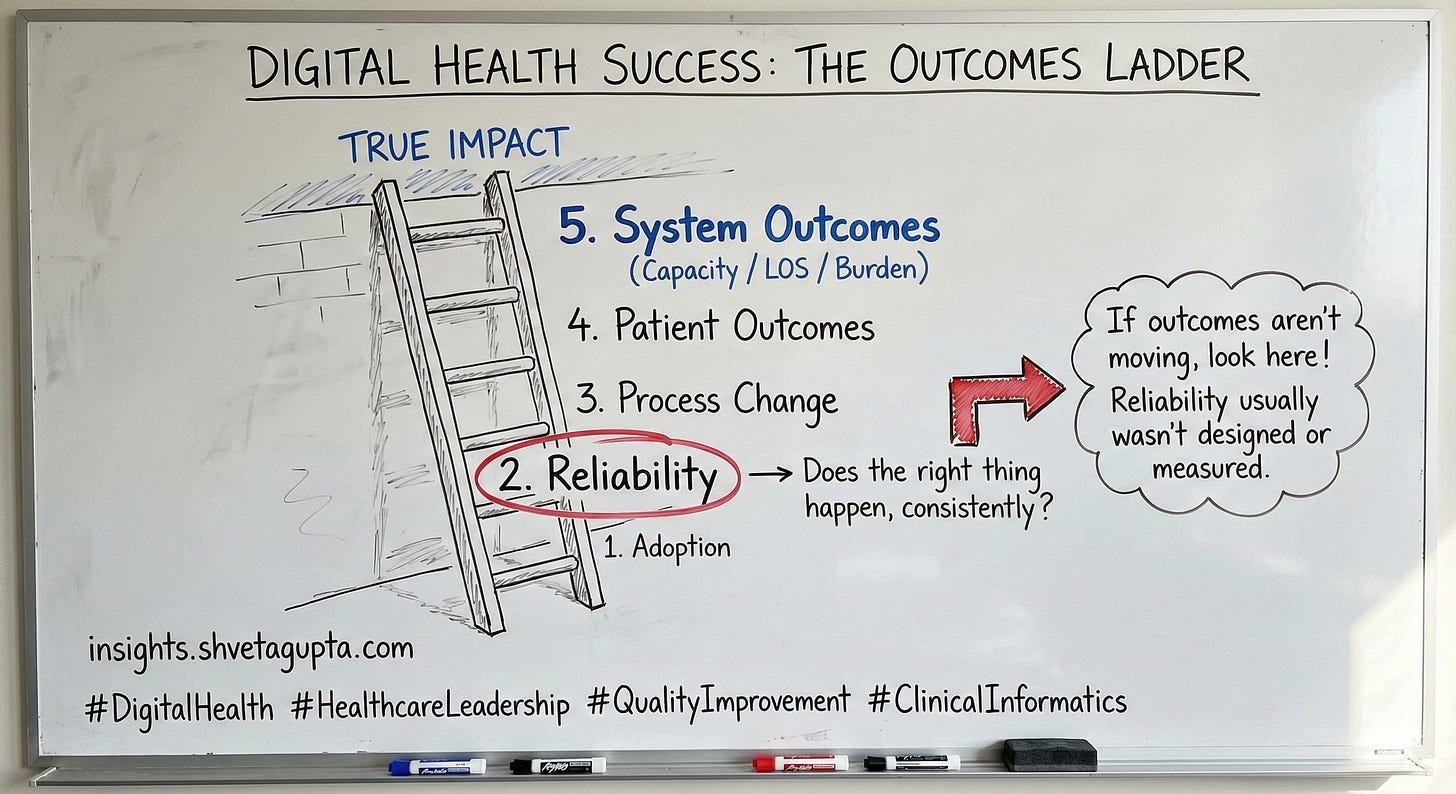

The Digital Health Outcomes Ladder

The Outcomes Ladder: adoption → impact

Moving beyond adoption to measurable impact

Happy Tuesday. This week’s note sits where digital health, quality, and strategy meet. It offers a practical framework you can use to define success, align stakeholders, and measure impact beyond “usage.”

The idea in 30 seconds

Many digital initiatives don’t fail—they stall at the adoption stage. If you don’t define reliability and downstream outcomes, you’ll end up reporting on activity rather than impact.

The framework

The Outcomes Ladder (5 rungs):

Adoption: Who uses it, when, and where?

Reliability: Does the right action happen consistently in the real workflow?

Process change: Are key steps happening earlier or better (timing, escalation, decision quality)?

Patient outcomes: Are harm, complications, and readmissions falling, and are symptoms improving?

System outcomes: Is capacity freed up, and are length of stay (LOS), cost, and staff burden falling?

Rule of thumb: If reliability isn’t defined, outcomes won’t move.

A quick example

A tool achieved strong adoption, but clinicians bypassed it during peak hours because it didn’t fit the workflow. Outcomes didn’t change until the team fixed reliability (timing, defaults, and a clear escalation path).

How to measure it

Pick one metric per rung (keep it small):

Adoption: % of eligible encounters in which the tool is used

Reliability: % of eligible encounters in which the intended action occurs within the target time

Process: time to order or time to escalation

Patient outcome: complication rate, readmission rate, or a symptom-control proxy

System: LOS, bed-days saved, or a staff time/burden proxy
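The first three rungs in the list above can usually be computed from encounter-level logs; patient and system outcomes generally need follow-up data and are left out here. Below is a minimal Python sketch that assumes a hypothetical `Encounter` record with an eligibility flag, a tool-usage flag, and timestamps for when the patient became eligible and when the intended action occurred. Map these fields to whatever your own data source actually exposes. Reliability uses all eligible encounters as its denominator, so an action that never happens counts as a miss rather than dropping out of the calculation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Encounter:
    # Hypothetical record layout; not taken from any specific system.
    eligible: bool                  # meets the initiative's inclusion criteria
    tool_used: bool                 # adoption signal
    eligible_at: datetime           # clock start: when the patient became eligible
    action_at: Optional[datetime]   # when the intended action happened (None if never)


def pct(numerator: int, denominator: int) -> Optional[float]:
    # Return a percentage, or None when there is nothing to measure.
    return 100.0 * numerator / denominator if denominator else None


def ladder_metrics(encounters: List[Encounter], target: timedelta) -> dict:
    eligible = [e for e in encounters if e.eligible]
    used = sum(e.tool_used for e in eligible)
    # Delays exist only for eligible encounters where the action actually occurred.
    delays = [e.action_at - e.eligible_at for e in eligible if e.action_at is not None]
    on_time = sum(d <= target for d in delays)
    minutes = [d.total_seconds() / 60 for d in delays]
    return {
        "adoption_pct": pct(used, len(eligible)),
        # Denominator is every eligible encounter, so missed actions count as failures.
        "reliability_pct": pct(on_time, len(eligible)),
        # Process metric: median minutes to action, among encounters where it occurred.
        "median_minutes_to_action": median(minutes) if minutes else None,
    }


# Usage with made-up sample data (4 eligible encounters, 1 ineligible).
t0 = datetime(2024, 1, 1, 8, 0)
encounters = [
    Encounter(True, True, t0, t0 + timedelta(minutes=20)),
    Encounter(True, True, t0, t0 + timedelta(minutes=90)),
    Encounter(True, False, t0, None),
    Encounter(True, True, t0, t0 + timedelta(minutes=45)),
    Encounter(False, False, t0, None),
]
metrics = ladder_metrics(encounters, target=timedelta(minutes=60))
print(metrics)
# prints: {'adoption_pct': 75.0, 'reliability_pct': 50.0, 'median_minutes_to_action': 45.0}
```

In this toy data, adoption (75%) looks healthier than reliability (50%), which is the gap the ladder is meant to expose. Running the same function on only the encounters that start during peak hours is one way to look for the kind of bypass described in the example earlier.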

One action for this week

Choose one initiative and write a one-page Outcomes Ladder. If you can’t clearly define reliability, start there.
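If you keep your one-page ladders in a shared repository or tracker, a small structure can flag the gaps for you. The following is a minimal sketch with hypothetical class and field names (`OutcomesLadder`, `LadderRung`, `start_here`). It encodes the advice to start with reliability when it is undefined. Falling back to the lowest undefined rung after that is an assumption of this sketch, not part of the framework itself.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Rungs in ladder order, from bottom to top.
RUNGS = ["adoption", "reliability", "process", "patient_outcome", "system_outcome"]


@dataclass
class LadderRung:
    metric: Optional[str] = None   # the single metric chosen for this rung
    target: Optional[str] = None   # what "good" looks like, e.g. ">= 80% within 60 min"


@dataclass
class OutcomesLadder:
    initiative: str
    rungs: Dict[str, LadderRung] = field(
        default_factory=lambda: {name: LadderRung() for name in RUNGS}
    )

    def undefined_rungs(self) -> List[str]:
        # Rungs that still have no metric, listed bottom to top.
        return [name for name in RUNGS if self.rungs[name].metric is None]

    def start_here(self) -> Optional[str]:
        missing = self.undefined_rungs()
        if "reliability" in missing:
            return "reliability"   # reliability first, per the rule of thumb
        # Assumed fallback: the lowest rung still undefined (None if complete).
        return missing[0] if missing else None


# Usage: a hypothetical ladder with only two rungs filled in.
ladder = OutcomesLadder("hypothetical-initiative")
ladder.rungs["adoption"].metric = "% of eligible encounters in which the tool is used"
ladder.rungs["patient_outcome"].metric = "readmission rate"
print(ladder.start_here())       # prints: reliability
print(ladder.undefined_rungs())  # prints: ['reliability', 'process', 'system_outcome']
```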