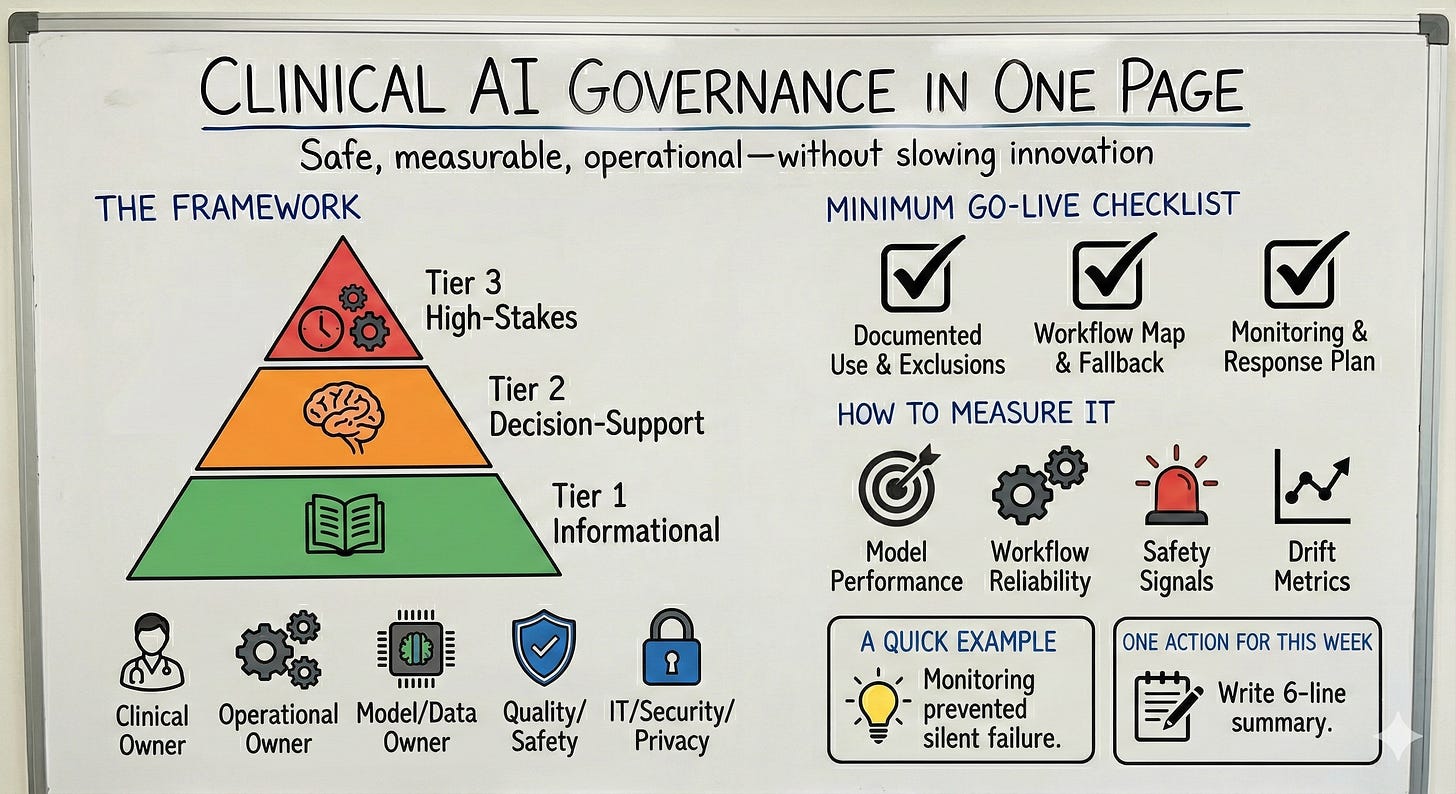

Clinical AI Governance in One Page

Safe, measurable, operational—without slowing innovation

Happy Tuesday. This week’s note offers a one-page governance model that makes clinical AI safer and easier to operationalize, built on clear decision rights, risk tiers, and monitoring.

The idea in 30 seconds

AI doesn’t need more committees. It needs clarity: who owns it, how risk is tiered, what gets monitored, and what happens when it fails.

The framework

Risk tiering (simple):

Tier 1 (Informational): no direct clinical action

Tier 2 (Decision-support): influences actions

Tier 3 (High-stakes): time-sensitive, high potential for harm, or automated action
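To make the tiers repeatable across intake reviews, the rules can be written down as code. This is a minimal sketch, assuming a hypothetical intake form with four yes/no questions (`influences_action`, `time_sensitive`, `high_harm_potential`, `automated_action`); the names and the rule that any single high-stakes trait forces Tier 3 are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    INFORMATIONAL = 1     # no direct clinical action
    DECISION_SUPPORT = 2  # influences actions
    HIGH_STAKES = 3       # time-sensitive, high harm potential, or automated


@dataclass
class UseCase:
    # Hypothetical intake answers; rename to match your own intake form.
    influences_action: bool
    time_sensitive: bool = False
    high_harm_potential: bool = False
    automated_action: bool = False


def assign_tier(uc: UseCase) -> Tier:
    """Return the highest tier any single answer triggers (conservative by design)."""
    if uc.time_sensitive or uc.high_harm_potential or uc.automated_action:
        return Tier.HIGH_STAKES
    if uc.influences_action:
        return Tier.DECISION_SUPPORT
    return Tier.INFORMATIONAL


# Illustrative case: an alert that pages a rapid-response team.
print(assign_tier(UseCase(influences_action=True, time_sensitive=True)).name)  # → HIGH_STAKES
```

The design choice is deliberately conservative: an automated action lands in Tier 3 even if the intake form says it does not "influence" a clinician, so ambiguous cases get more oversight, not less.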

Owners (explicit):

Clinical owner (intent + safe use)

Operational owner (workflow + adoption)

Model/data owner (performance + drift)

Quality/safety (incident review)

IT/security/privacy (access + audit)
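"Explicit" ownership is easy to check mechanically: every role should have a named person before review. A minimal sketch, assuming owners are tracked as a simple role-to-name mapping; the role keys and the placeholder names are hypothetical.

```python
# The five accountable roles from the framework above; keys are hypothetical labels.
REQUIRED_ROLES = (
    "clinical",             # intent + safe use
    "operational",          # workflow + adoption
    "model_data",           # performance + drift
    "quality_safety",       # incident review
    "it_security_privacy",  # access + audit
)


def missing_owners(owners: dict[str, str]) -> list[str]:
    """Return required roles that have no named owner (key absent or name blank)."""
    return [role for role in REQUIRED_ROLES if not owners.get(role, "").strip()]


# Placeholder names only.
owners = {
    "clinical": "Clinical lead (placeholder)",
    "operational": "Unit operations manager (placeholder)",
    "model_data": "",
    "quality_safety": "Patient safety office (placeholder)",
}
print(missing_owners(owners))  # → ['model_data', 'it_security_privacy']
```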

Minimum go-live checklist:

Intended use + exclusions documented

Workflow map + fallback plan

Monitoring plan (metrics + cadence + reviewer)

Incident response (escalation + “pause/kill switch” criteria)
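The checklist works as a go-live gate: nothing ships until every item is filled in. A minimal sketch, assuming a hypothetical `GoLivePacket` record whose fields mirror the four items; an empty exclusions list is treated as undocumented, so "none" should be recorded explicitly when that is the case.

```python
from dataclasses import dataclass, field


@dataclass
class GoLivePacket:
    # Hypothetical fields mirroring the four checklist items above.
    intended_use: str = ""
    exclusions: list[str] = field(default_factory=list)  # record "none" explicitly if there are none
    workflow_map: str = ""
    fallback_plan: str = ""
    metrics: list[str] = field(default_factory=list)
    cadence: str = ""
    reviewer: str = ""
    escalation_path: str = ""
    pause_criteria: list[str] = field(default_factory=list)


def go_live_gaps(p: GoLivePacket) -> list[str]:
    """Return the checklist items that are still incomplete (empty list = ready)."""
    checks = {
        "intended use + exclusions": bool(p.intended_use and p.exclusions),
        "workflow map + fallback plan": bool(p.workflow_map and p.fallback_plan),
        "monitoring plan": bool(p.metrics and p.cadence and p.reviewer),
        "incident response": bool(p.escalation_path and p.pause_criteria),
    }
    return [item for item, ok in checks.items() if not ok]


packet = GoLivePacket(
    intended_use="Flag adult inpatients at risk of deterioration",
    exclusions=["patients under 18"],
    workflow_map="Charge nurse reviews flag, then calls the team",
    fallback_plan="Revert to standard early-warning rounds",
    metrics=["PPV", "% flags acted on"],
    cadence="monthly",
    reviewer="model/data owner",
)
print(go_live_gaps(packet))  # → ['incident response']
```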

A quick example

A model “worked” until the underlying workflow changed and its performance drifted. A basic monitoring cadence and clear ownership prevented a silent failure and restored trust quickly.

How to measure it

Choose what matches your use case:

Model performance (calibration / PPV / sensitivity)

Workflow reliability (% of cases where the intended action occurs)

Safety signals (overrides, incidents, near-misses)

Drift metrics (performance over time; subgroup checks when applicable)
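Most of these metrics reduce to a few counts. The sketch below, using plain Python and toy numbers, computes PPV and sensitivity from 0/1 labels, a crude calibration-in-the-large check (mean predicted risk minus observed event rate; a full calibration review would also look at a calibration curve), PPV by subgroup, and a simple drift flag. The baseline and tolerance in `drift_alerts` are hypothetical parameters each team would set locally.

```python
from collections.abc import Sequence


def _counts(y_true: Sequence[int], y_pred: Sequence[int]) -> tuple[int, int, int]:
    """True positives, false positives, false negatives for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, fp, fn


def ppv(y_true, y_pred) -> float:
    tp, fp, _ = _counts(y_true, y_pred)
    return tp / (tp + fp) if tp + fp else float("nan")


def sensitivity(y_true, y_pred) -> float:
    tp, _, fn = _counts(y_true, y_pred)
    return tp / (tp + fn) if tp + fn else float("nan")


def calibration_in_the_large(y_true, risk) -> float:
    """Mean predicted risk minus observed event rate; near 0 is good on average."""
    return sum(risk) / len(risk) - sum(y_true) / len(y_true)


def ppv_by_subgroup(y_true, y_pred, groups) -> dict[str, float]:
    """PPV computed separately within each subgroup label."""
    out = {}
    for g in sorted(set(groups)):
        idx = [i for i, x in enumerate(groups) if x == g]
        out[g] = ppv([y_true[i] for i in idx], [y_pred[i] for i in idx])
    return out


def drift_alerts(monthly_ppv: dict[str, float], baseline: float, tolerance: float) -> list[str]:
    """Periods where PPV fell more than `tolerance` below the go-live baseline."""
    return [period for period, value in monthly_ppv.items() if value < baseline - tolerance]


# Toy numbers for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 0, 1, 0]
risk = [0.8, 0.6, 0.7, 0.4, 0.2, 0.1, 0.9, 0.3]
groups = ["A", "A", "B", "B", "A", "B", "A", "B"]

print(ppv(y_true, y_pred), sensitivity(y_true, y_pred))  # → 0.75 0.75
print(ppv_by_subgroup(y_true, y_pred, groups))
print(drift_alerts({"Jan": 0.74, "Feb": 0.73, "Mar": 0.61}, baseline=0.75, tolerance=0.05))  # → ['Mar']
```

Comparing each period against a fixed go-live baseline, rather than against the previous period, keeps slow, gradual drift from hiding inside small month-to-month changes.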

One action for this week

For any AI initiative, write a 6-line summary: purpose, population, exclusions, tier, primary metric, and monitoring cadence.
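If summaries live in a registry, a small structured record keeps them consistent. A minimal sketch, assuming a hypothetical `InitiativeSummary` class with one field per line of the summary; it rejects blank fields and tiers outside 1 to 3, and the example values are illustrative only.

```python
from dataclasses import dataclass, fields


@dataclass
class InitiativeSummary:
    # One field per line of the summary, in the order the note lists them.
    purpose: str
    population: str
    exclusions: str
    tier: int
    primary_metric: str
    monitoring_cadence: str

    def __post_init__(self) -> None:
        if self.tier not in (1, 2, 3):
            raise ValueError(f"tier must be 1, 2, or 3, got {self.tier!r}")
        blank = [f.name for f in fields(self) if not str(getattr(self, f.name)).strip()]
        if blank:
            raise ValueError(f"empty fields: {blank}")

    def render(self) -> str:
        """Return the six 'Label: value' lines joined by newlines."""
        return "\n".join(
            f"{f.name.replace('_', ' ').capitalize()}: {getattr(self, f.name)}"
            for f in fields(self)
        )


summary = InitiativeSummary(
    purpose="Prioritize post-discharge follow-up calls",
    population="Adults discharged from medical wards",
    exclusions="Hospice discharges",
    tier=2,
    primary_metric="PPV at the alert threshold",
    monitoring_cadence="Monthly, reviewed by the model/data owner",
)
print(summary.render())
```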